1. INTRODUCTION

2. RESEARCH TRENDS

3. ANALYSIS OF REQUIRED TECHNOLOGIES

3.1. Power Conversion System Modeling

3.2. Energy Storage System Modeling

3.3. Low Earth Orbit Satellite Attitude Prediction System Modeling

1. INTRODUCTION

For the mission design and system state assessment of Low Earth Orbit (LEO) satellites, continuous monitoring and accurate prediction are essential. However, conventional ground-based system state prediction methods for LEO satellites face limitations due to non-contact periods with ground stations, which hinder precise forecasts of the satellite’s state during the next operational phase. These constraints lead to conservative estimations of satellite system states, thereby reducing the accuracy of mission performance analysis and planning. Consequently, this can result in mission performance degradation, inefficient resource management, and increased operational costs.

Furthermore, the inability to effectively estimate the aging of satellite systems over their operational lifetime hinders the development of reliable dynamic simulators for mission optimization and stable operations. In particular, unexpected system anomalies or environmental changes during missions require sophisticated state prediction and operational optimization techniques to ensure timely responses. Therefore, in addition to ground-based data, precise predictive models that leverage real-time data collected during mission execution are necessary.

Recent studies have focused on optimizing satellite operations using artificial intelligence (AI) and data-driven approaches to overcome these limitations. AI-based predictive models can anticipate state changes even when the satellite is not in contact with a ground station, enabling more precise analysis than traditional state estimation methods. In particular, such models have demonstrated effective performance in applications such as anomaly detection in power systems, attitude control optimization, and battery degradation prediction [1]. Additionally, real-time analysis of large volumes of telemetry data allows for early detection of performance degradation or anomalies in satellites, reducing the risk of mission failure and ensuring stable operations. As satellite systems become increasingly complex and diverse mission requirements emerge, the need to replace traditional rule-based approaches with data-driven dynamic modeling techniques is growing.

Accordingly, this study analyzes recent research trends and identifies key modeling techniques to maximize the efficiency of LEO satellite operations. In particular, it highlights the necessity of AI-based predictive models to overcome the limitations of traditional operational methods and discusses data-driven operational strategies to enhance the reliability of satellite missions. Additionally, this study proposes modeling approaches that consider factors such as satellite power systems, battery performance, and attitude control to provide more effective optimization strategies. Through these efforts, we aim to improve the reliability and autonomy of satellite operations, ultimately contributing to maximizing the success rate of global space missions in the long term.

2. RESEARCH TRENDS

In the domestic satellite system, a standardized process for modeling and analyzing variations in key on-orbit performance requirements has yet to be established. In particular, there is a lack of modeling that accounts for system degradation. Consequently, the mission design of LEO satellites is based on predefined mission constraints set during the satellite development phase, such as mission lifetime, maneuver time, and imaging intervals. This approach makes it challenging to maximize mission execution frequency, observation coverage, and optimal mission allocation.

Additionally, to prevent unexpected malfunctions, such as the activation of satellite safe mode, there is a tendency to adopt conservative mission designs. While attempts have been made to utilize past satellite operational data for mission planning, the nonlinear characteristics of satellite time-series data often lead to reliance on developers’ experience, making it difficult to develop and apply generalized models.

Internationally, organizations responsible for satellite operations, such as SAFT and NASA, have conducted research on orbital power management and degradation. However, these technologies are classified as core technologies, restricting public access to related information. Algorithms used to assess mission feasibility based on variations in maneuvering performance over the mission period, maneuvering time and intervals, and imaging modes and regions are likewise protected as proprietary technologies.

Even among international research institutions, studies on degradation over the mission period and predictive models for future states in non-contact periods remain in their early stages, with no significant development efforts yet underway. Regarding performance estimation over the operational period, academic research has primarily focused on modeling and control of commercial ground-based systems for practical applications, while research and development in the aerospace sector remain insufficient. Therefore, the approach of designing missions based on real-time estimated performance variations in orbit, rather than predefined requirements set during the satellite development phase, is still in its nascent stage both domestically and internationally.

The mission design of LEO satellites is closely linked not only to the satellite’s orbital and hardware design but also to the design of ground-based systems, including communication with ground stations and data management. Recently, advancements have focused on improving efficiency and reliability through high-level technologies and optimization techniques, with on-orbit state estimation emerging as a critical factor [2].

One of the most important aspects of LEO satellite mission design is the orbital design. The satellite’s orbit plays a crucial role in target area observation and communication support, with factors such as ground track repeat cycles serving as key considerations. To address this, nonlinear simulation-based numerical optimization techniques have been introduced. Commercial software such as STK and MATLAB is commonly used to simulate orbits and derive optimal orbital parameters, demonstrating high accuracy. Consequently, orbit prediction errors in actual mission design are managed to a level that does not significantly impact mission execution.

On the other hand, the ground communication system is another key factor in the mission design of LEO satellites. Due to the nature of their orbits, LEO satellites have a high relative velocity with respect to the Earth and a limited connection time with ground stations. To overcome these challenges, efficient ground station network deployment and high-speed data transmission technologies utilizing Ku- and Ka-band frequencies have been developed. Recently, high-speed data link systems supporting satellite-to-ground communication and the use of mobile ground stations have been actively researched. These technologies can be particularly useful for missions requiring real-time data transmission in emergency situations.

However, the location and number of ground stations significantly impact operational costs, while factors such as radio frequency interference and weather conditions can degrade communication quality. Moreover, the implementation of these systems is limited for satellites that have already been launched, restricting their application to existing satellites.

In ground-based mission design, data management, including the processing and storage of satellite data, is also essential. LEO satellites generate high-resolution, large-volume streaming data, necessitating mission designs that enable efficient data transmission within the same orbital pass.

Another key aspect of mission design is the autonomy of satellite systems. Recent research has explored designing satellites with the capability to autonomously perform missions and respond to issues by making independent decisions. This is achieved through the application of artificial intelligence (AI) and machine learning technologies, which can be utilized for satellite data processing, mission planning optimization, and system state monitoring. However, algorithms sophisticated enough for mission design have not yet been fully developed for satellite systems, and the throughput of onboard systems must also be considered. As a result, research in this area is still in its early stages. These technologies are also difficult to apply to satellites that have already been launched.

Ground-based mission design for LEO satellites also involves various challenges. First, establishing and maintaining a ground station network requires substantial costs, particularly since multiple ground stations are needed to cover a wide area. Furthermore, communication between ground stations and satellites can be affected by radio frequency interference and weather conditions, potentially reducing communication stability.

As a solution to overcome these limitations, the optimal placement of ground stations and the use of mobile ground stations have been proposed. These approaches can extend communication time and enhance operational flexibility. However, the relevant technologies are still in the developmental phase and are currently difficult to implement in satellite operations.

Additionally, efforts are being made to address these challenges through the design and enhancement of telemetry systems [2]. The design of telemetry data packages for the core systems of LEO satellites is a crucial task to ensure stable satellite operations and mission success. Telemetry data is used to monitor the satellite’s status and performance in real time, including key parameters such as power system status, attitude control system information, temperature conditions, sensor outputs, and hardware anomalies. By appropriately designing the frequency and bit size of data packages, efficient and reliable data transmission can be achieved even within the constraints of limited bandwidth and energy resources.

To improve reliability, data packages are designed with error detection and correction techniques. Typically, they include checksums or error correction codes, which help prevent data loss or corruption during transmission and support data recovery at the ground station. While these error detection and correction bits affect the overall size of the data package, they are essential for ensuring data integrity. This functionality becomes even more critical in environments with unstable communication conditions or low signal-to-noise ratios (SNR).
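As a minimal sketch of the error-detection step described above, the following Python example frames a telemetry payload with a CRC-32 checksum and verifies it on reception. The frame layout (2-byte packet ID, 2-byte length, payload, 4-byte CRC) and the function names are illustrative assumptions, not a format taken from the cited work; a CRC only detects corruption, so error correction would require an additional code.

```python
import struct
import zlib


def build_packet(packet_id: int, payload: bytes) -> bytes:
    """Frame = 2-byte ID + 2-byte payload length + payload + 4-byte CRC-32.

    The layout is a hypothetical example, not a real telemetry standard.
    """
    header = struct.pack(">HH", packet_id, len(payload))
    crc = zlib.crc32(header + payload)
    return header + payload + struct.pack(">I", crc)


def parse_packet(frame: bytes):
    """Return (packet_id, payload), or None if the frame fails validation."""
    if len(frame) < 8:
        return None
    body = frame[:-4]
    (received_crc,) = struct.unpack(">I", frame[-4:])
    if zlib.crc32(body) != received_crc:
        return None  # corruption detected; the ground station can request a resend
    packet_id, length = struct.unpack(">HH", body[:4])
    payload = body[4:]
    if len(payload) != length:
        return None
    return packet_id, payload
```

The four checksum bytes illustrate the trade-off noted above: they enlarge every frame, but they allow the ground station to reject a corrupted frame instead of using bad data.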

Additionally, the visibility time between the ground station and the satellite is a critical factor in telemetry data package design. LEO satellites orbit the Earth approximately every 90 to 120 minutes, with limited connection time to ground stations over specific regions. To efficiently transmit data under these constraints, a data prioritization approach is employed. High-priority data, such as power system and attitude control information, is transmitted first within the available visibility window, while lower-priority data is either stored until the next connection with the ground station or transmitted upon request. Furthermore, data compression techniques are applied to maximize the amount of data transmitted within the limited communication time.
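The prioritization scheme described above can be sketched as a greedy fill of one visibility window. In this hedged example, the `Packet` fields, the convention that a lower number means higher priority, and the use of `zlib` compression are all assumptions made for illustration.

```python
import zlib
from dataclasses import dataclass


@dataclass
class Packet:
    name: str
    priority: int   # assumed convention: lower value = more urgent
    payload: bytes


def plan_pass(packets, window_s: float, rate_bps: float):
    """Greedily fill one visibility window in priority order.

    Returns (sent, deferred) as lists of packet names. Deferred packets
    would be stored on board until the next ground contact.
    """
    budget_bits = window_s * rate_bps
    sent, deferred = [], []
    for pkt in sorted(packets, key=lambda p: p.priority):
        size_bits = 8 * len(zlib.compress(pkt.payload))  # compress before downlink
        if size_bits <= budget_bits:
            budget_bits -= size_bits
            sent.append(pkt.name)
        else:
            deferred.append(pkt.name)
    return sent, deferred
```

A real planner would also account for protocol overhead and link margin; the sketch only shows how priority ordering and compression interact with a fixed downlink budget.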

The transmission of telemetry data is also closely linked to satellite energy resource management. Since satellites operate with limited power resources, minimizing energy consumption during data transmission is essential.

The design of data packages also focuses on optimizing bandwidth utilization. When communication bandwidth is limited, reducing data package size, prioritizing data transmission, and applying compression techniques enhance efficiency. Conversely, when sufficient bandwidth is available, larger data packages can be transmitted to minimize overhead and maximize transmission efficiency. Throughout this design process, data importance and mission requirements are comprehensively considered, and package size and transmission intervals are dynamically adjusted to achieve optimal performance.

Therefore, the design of telemetry data packages for LEO satellite core system information is based on data importance, variability characteristics, communication constraints, and energy efficiency. The transmission frequency of data packages is flexibly configured according to the state of the satellite system and the rate of change, ensuring a balance between high-frequency and low-frequency data transmission. The number of bits is dynamically allocated based on data accuracy requirements and bandwidth limitations, while error detection and correction mechanisms are incorporated to ensure data reliability.
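To make the bit-allocation idea concrete, the following sketch computes the number of bits needed to encode a telemetry channel at a given resolution. The function name and the example channel ranges are hypothetical values chosen for illustration.

```python
import math


def bits_required(min_val: float, max_val: float, resolution: float) -> int:
    """Smallest bit width that can represent [min_val, max_val] in steps of resolution."""
    levels = (max_val - min_val) / resolution + 1
    return math.ceil(math.log2(levels))


# Hypothetical channels: (minimum, maximum, resolution)
channels = {
    "battery_voltage_V": (0.0, 40.0, 0.01),
    "panel_temperature_C": (-40.0, 85.0, 0.5),
}
allocation = {name: bits_required(*spec) for name, spec in channels.items()}
```

Under these assumed ranges, a finer resolution requirement directly increases the bit width, which is the trade-off between data accuracy and bandwidth discussed above.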

An optimized design enables stable satellite operation and effective data utilization at ground stations, ultimately increasing the probability of mission success. This design approach supports efficient and reliable data transmission even under resource-constrained environments, contributing to maximizing satellite system performance.

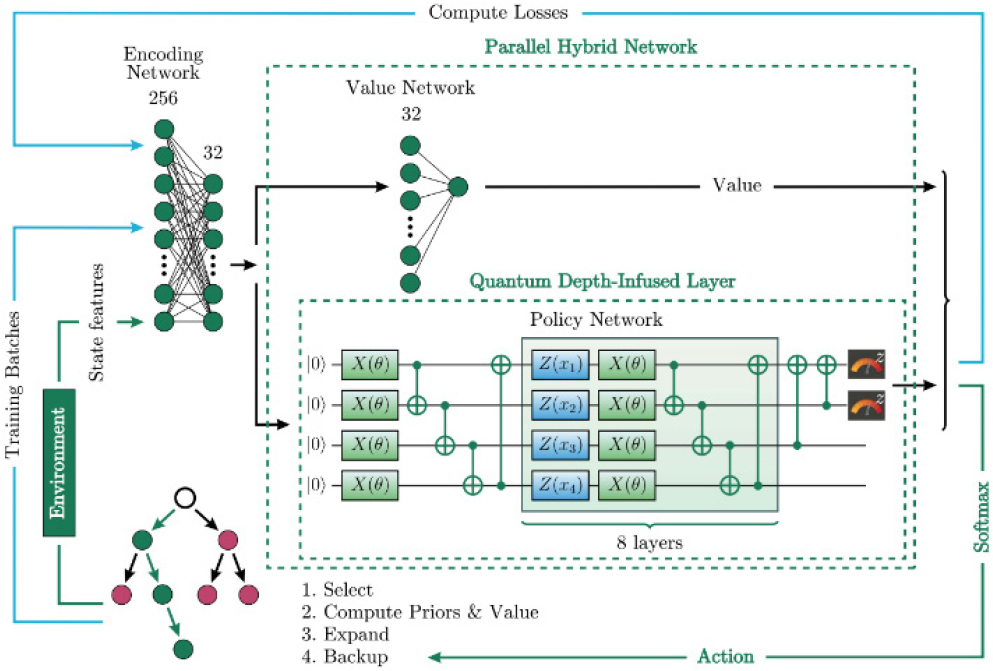

As shown in Fig. 1, a novel approach utilizing artificial intelligence has been proposed to optimize mission planning through multi-satellite regional mapping. This approach aims to address the limitations of parameter settings and the computational cost issues associated with traditional genetic algorithms while optimizing the balance between satellite resources and observation requirements as a key research focus.

The satellite mission planning problem is defined as a complex optimization problem that must consider multiple conflicting objectives. These objectives include maximizing coverage of observation areas, optimizing satellite energy and storage capacity, and improving the efficiency of observation data transmission. However, because the algorithm parameters of the genetic algorithms used to solve this problem are typically determined through empirical methods, ensuring optimal performance for specific problems is challenging. Additionally, fixed parameter settings have limitations in adapting to diverse environmental conditions and dynamic situations. To improve optimization performance, approaches such as increasing the number of generations or introducing more complex strategies are often used, but these methods result in higher computational costs.

To address these challenges, as shown in Fig. 2, approaches utilizing Deep Reinforcement Learning (DRL) have been proposed to automatically adjust parameters at each evolutionary generation.

This algorithm leverages the learning capability of reinforcement learning during the evolutionary process to learn the relationship between parameters and objective function states, allowing for the selection of optimal parameters. The training and learning process is designed using simplified data derived from test functions rather than directly utilizing complex satellite data and orbital information. This approach enhances training speed and reduces the computational complexity of the algorithm.

A neural network is used to learn the relationship between population states and evolutionary parameters, and the trained model can dynamically set parameters for each generation in real time. This process increases the flexibility of the algorithm and significantly reduces computational costs. MOLEA, by integrating deep reinforcement learning with a multi-objective evolutionary algorithm, greatly enhances the efficiency of satellite mission planning. However, like other algorithms, it also has limitations, including simplifications in modeling the constraints of satellite mission design and challenges in accurately predicting the future state of satellites.

Additionally, efforts have been made to improve the efficiency and real-time performance of satellite mission planning by utilizing algorithms such as Monte Carlo Tree Search (MCTS). Traditional satellite mission planning methods involve generating plans at ground stations and transmitting them to satellites. While this approach is simple and stable, it has limitations in providing immediate responsiveness and optimization performance in modern environments where the number of observation targets is increasing and user demands are becoming more complex. Since conventional methods operate based on predefined plans, they struggle to handle dynamic and complex requirements in real time and face difficulties in efficiently utilizing satellite resources and time.

Recently, various algorithms have been introduced to automate and optimize satellite mission planning [5]. In particular, AI-based algorithms optimize observation targets and enable satellites to autonomously execute missions without ground station intervention. However, existing algorithms have shown limitations in balancing profitability (importance and value of observation targets) and real-time performance (computation speed and feasibility of execution). To address this issue, research is being conducted on incorporating the State Uncertainty Network (State-UN) to accelerate search processes and to maximize efficiency in node selection.

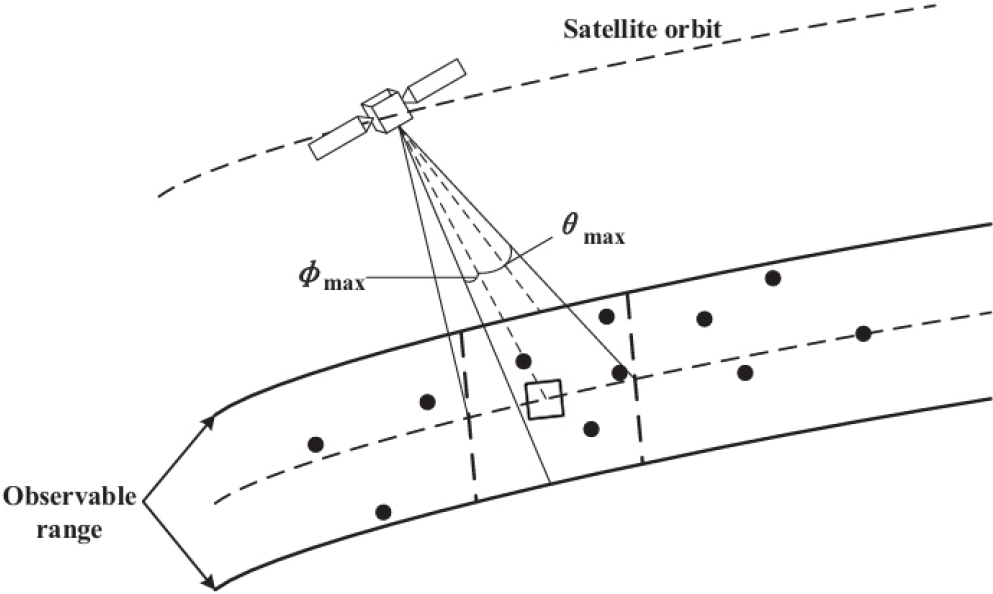

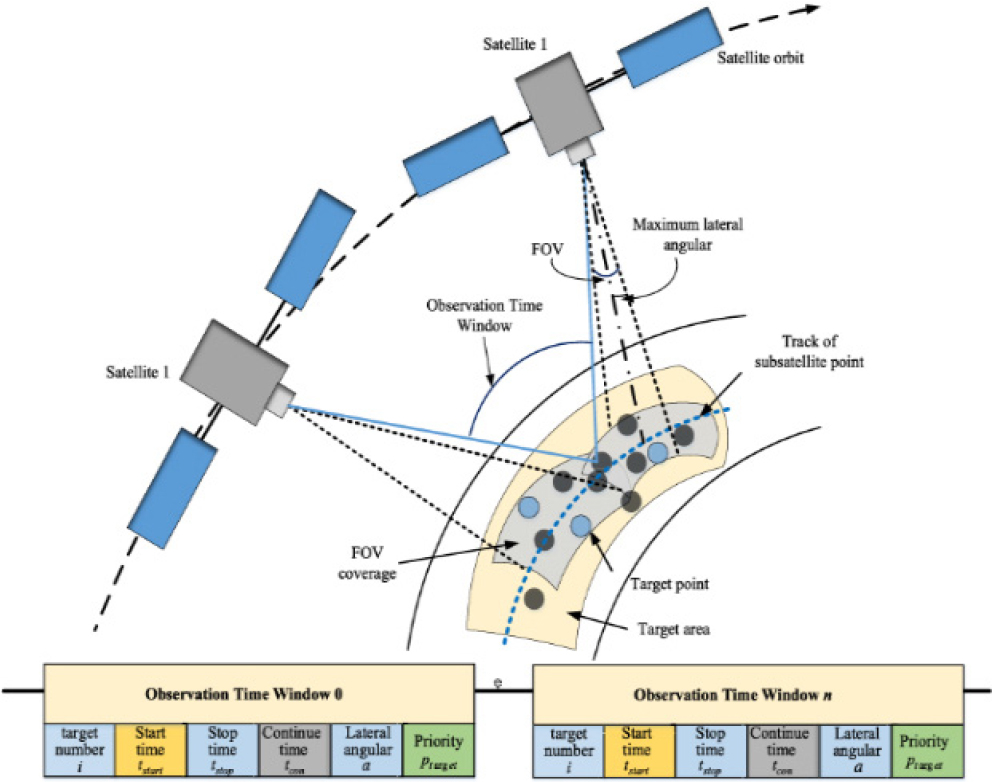

This requires modeling orbital dynamics and constraints, as shown in Fig. 3.

The mission planning model must calculate observation feasibility and transition times, including the Visibility Time Window (VTW) and attitude transition time. The VTW represents the time period during which a satellite can communicate with or observe a specific target, while the attitude transition time refers to the time required for the satellite to change its observation direction. This model integrates multiple constraints such as attitude adjustments, storage capacity, and energy consumption to develop a more sophisticated mission plan. Therefore, accurate modeling is essential for a precise mission allocation.
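The interaction between the VTW and the attitude transition time can be illustrated with a simple feasibility check. This is a sketch under stated assumptions: the single-axis pointing angle, the constant slew rate, and the settling time are simplifications introduced here, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    target: str
    vtw_start: float     # start of the visibility time window, s
    vtw_end: float       # end of the visibility time window, s
    duration: float      # required imaging time, s
    pointing_deg: float  # assumed single-axis pointing angle, deg


def transition_time(a: Observation, b: Observation,
                    slew_rate_dps: float = 1.0, settle_s: float = 5.0) -> float:
    """Attitude transition time: slew at a constant rate, then settle."""
    return abs(a.pointing_deg - b.pointing_deg) / slew_rate_dps + settle_s


def earliest_start(prev: Observation, prev_start: float, nxt: Observation):
    """Earliest feasible start of nxt after prev, or None if its VTW is missed."""
    ready = prev_start + prev.duration + transition_time(prev, nxt)
    start = max(ready, nxt.vtw_start)
    return start if start + nxt.duration <= nxt.vtw_end else None
```

A planner would call `earliest_start` for each candidate pair of observations and discard sequences that return `None`, before applying the storage and energy constraints mentioned above.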

Monte Carlo Tree Search (MCTS) is a search algorithm widely used in game-playing applications and is effective in making decisions under uncertain conditions. The State-UN evaluates state similarity and uncertainty to shorten the MCTS search process. When state uncertainty is low, the search is terminated early, and the result is determined. Additionally, state symmetry is leveraged to reduce the search space and minimize redundant computations. This approach efficiently utilizes computational resources, significantly reducing search time and resource consumption. By implementing this method, complex mission planning problems can be effectively addressed, satellite resources can be utilized efficiently, and high performance can be achieved across various application scenarios.

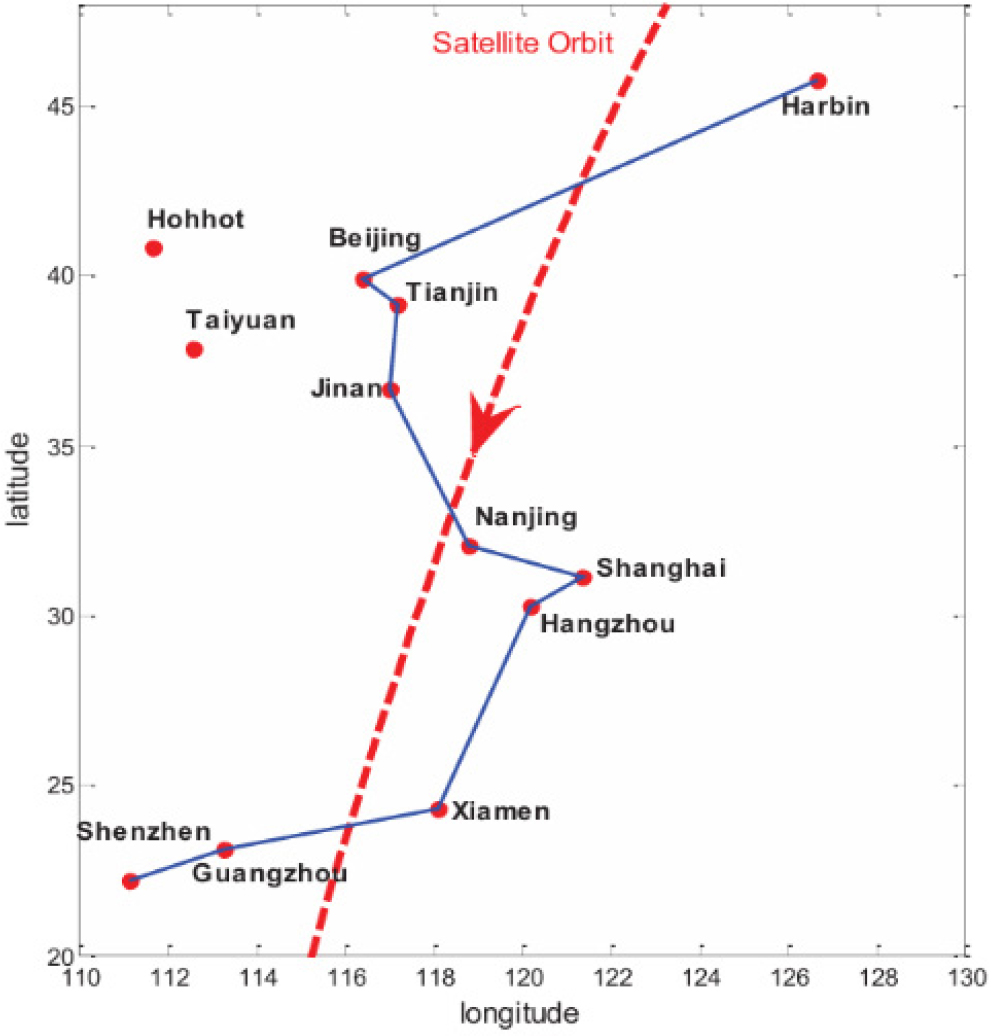

Artificial intelligence-based models have been proposed to solve the multi-satellite mission planning problem. Multi-satellite mission planning involves efficiently utilizing multiple satellites to observe various targets within a limited timeframe, making it a highly complex optimization problem. To address this challenge, adaptive mechanisms of genetic algorithms, as well as selection, crossover, and mutation operations, were employed, as shown in Fig. 4. Additionally, efforts were made to improve the efficiency of the algorithm by introducing an adaptive parameter adjustment method [6]. For this purpose, encoding and decoding methods were designed to represent each satellite and observation task (start time, end time, observation sequence), and optimization was performed through adaptive crossover and mutation operations.
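One simple way to realize adaptive parameter adjustment in a genetic algorithm is to lower the mutation probability for fitter individuals and keep it high for weaker ones. The rule below is an assumed illustrative scheme, not necessarily the one used in [6]; the bounds `low` and `high` are hypothetical values.

```python
def adaptive_rate(fitness: float, f_avg: float, f_max: float,
                  low: float = 0.01, high: float = 0.1) -> float:
    """Mutation probability that shrinks linearly as fitness approaches the best."""
    if f_max == f_avg or fitness < f_avg:
        # Converged population or below-average individual: explore aggressively.
        return high
    # Above-average individual: interpolate between high (at f_avg) and low (at f_max).
    return low + (high - low) * (f_max - fitness) / (f_max - f_avg)
```

The same form can be applied to the crossover probability, so that good schedules are preserved while poor ones are disrupted more often.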

To validate this approach, it was applied to the multi-target mission planning of the Agile Earth Observing Satellite (AEOS), which possesses high-speed three-axis attitude maneuvering capabilities. AEOS offers a wider observation range and enhanced observation capabilities compared to conventional satellites, but this also increases the complexity of mission planning. The AEOS mission planning problem is classified as NP-hard, meaning that no polynomial-time algorithm is known for solving it; it involves a vast search space and intricate constraints and demands significant computational resources.

Previous studies have proposed various intelligent optimization methods, including graph theory, integer programming, dynamic programming, genetic algorithms, and rolling planning. However, existing models have limitations in fully accounting for satellite attitude changes and energy consumption, which restricts their applicability in real-world scenarios.

Additionally, as shown in Fig. 5, various methods have been proposed to optimize the mission scheduling of a single satellite. Traditional scheduling approaches plan missions based on fixed or semi-fixed priority schemes while considering multiple constraints [7]. However, these methods have limitations in handling the complexity of dynamically changing satellite networks. When mission priorities shift in real time or resource constraints arise, conventional approaches become inefficient, leading to resource wastage and suboptimal performance.

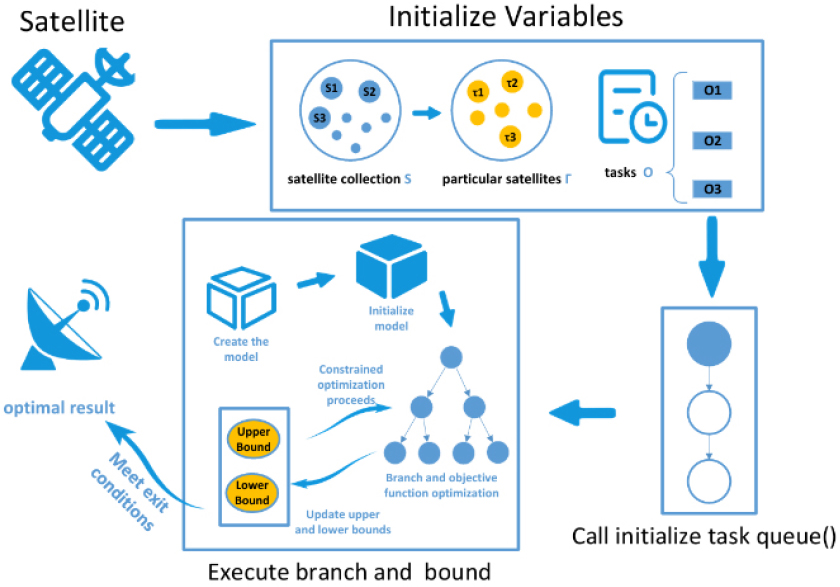

To address these challenges, dynamic scheduling models utilizing the Branch and Bound (BnB) algorithm, as shown in Fig. 6, have been proposed. This algorithm systematically partitions the search space and eliminates inefficient paths early in the process, thereby optimizing computational resource usage. Furthermore, it incorporates a mechanism for real-time task priority adjustment, enabling rapid adaptation to dynamic environmental changes. Specifically, it considers various factors such as task complexity, resource requirements, and execution time to derive the optimal execution sequence.

The core design of this approach begins with decomposing tasks into smaller sub-tasks. Each sub-task is managed based on attributes such as priority, execution time, and resource requirements. Subsequently, a priority adjustment mechanism dynamically modifies task priorities in real-time, considering environmental changes and available resource conditions. Based on these adjusted priorities, the BnB algorithm performs search and pruning operations, eliminating inefficient paths while exploring optimal ones. Finally, the optimized sub-tasks are integrated to generate the overall schedule, maximizing resource utilization and enhancing execution efficiency.
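The search-and-prune step can be sketched with a small Branch and Bound routine that selects sub-tasks to maximize total priority value under a single resource budget. The task tuple layout, the single-resource model, and the fractional upper bound are assumptions made for this example; the dynamic priority adjustment described above would simply update the values before each run.

```python
def branch_and_bound(tasks, capacity):
    """Select tasks maximizing total value within a resource capacity.

    tasks: list of (name, value, cost) with cost > 0.
    Returns (best_value, sorted list of chosen task names).
    """
    # Sort by value density so the greedy fractional bound is valid.
    items = sorted(tasks, key=lambda t: t[1] / t[2], reverse=True)
    best = {"value": 0, "chosen": []}

    def bound(i, value, room):
        # Optimistic estimate: fill the remaining room, allowing one fractional task.
        for _, v, c in items[i:]:
            if c <= room:
                room -= c
                value += v
            else:
                return value + v * room / c
        return value

    def search(i, value, room, chosen):
        if value > best["value"]:
            best["value"], best["chosen"] = value, list(chosen)
        if i == len(items) or bound(i, value, room) <= best["value"]:
            return  # prune: this branch cannot beat the incumbent
        name, v, c = items[i]
        if c <= room:                        # branch 1: schedule the task
            chosen.append(name)
            search(i + 1, value + v, room - c, chosen)
            chosen.pop()
        search(i + 1, value, room, chosen)   # branch 2: skip the task

    search(0, 0, capacity, [])
    return best["value"], sorted(best["chosen"])
```

Pruning on the optimistic bound is what removes inefficient paths early: a branch is abandoned as soon as even its best possible outcome cannot exceed the best schedule found so far.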

Furthermore, research on Multi-Satellite Imaging Mission Planning (MSIMP) has been conducted to optimize the allocation of observation targets using multiple satellites within limited resources [8]. This problem requires consideration of various observation requirements, orbital constraints, and resource limitations. Previous studies have attempted to solve MSIMP using approaches such as integer programming, constraint satisfaction problems, deterministic algorithms, heuristic algorithms, and artificial intelligence-based optimization algorithms. To this end, mission priority assessment methods have been employed, taking into account factors such as mission priority, mission type, urgency level, and user preferences, while adaptively selecting mission allocation frameworks.

Additionally, as shown in Fig. 7, bilevel programming models have been proposed to address the multi-satellite cooperative observation mission planning problem [9]. The bilevel programming model consists of two main stages. The upper level focuses on the mission allocation problem, where the objective is to assign missions to suitable satellites to maximize mission completion rates and resource balance. The lower level addresses the resource scheduling problem, optimizing the allocation of each satellite’s resources based on the mission assignments determined at the upper level.

This process aims to optimize mission completion rates, mission profitability, and resource load balancing by transforming the problem into a weighted single-objective optimization framework. At the upper level, each mission is checked against the satellite’s capabilities, and the observation resolution and execution time constraints are verified. At the lower level, resource allocation is performed while considering satellite availability and preparation times.
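A weighted single-objective formulation of this kind can be written, in a generic form, as a weighted sum of the three criteria. The symbols below (the decision vector $x$, the objective terms, and the weights $w_i$) are assumed notation introduced for illustration; the cited model [9] may normalize or combine the terms differently.

```latex
\max_{x} \; F(x) = w_1\, f_{\mathrm{comp}}(x) + w_2\, f_{\mathrm{profit}}(x) + w_3\, f_{\mathrm{bal}}(x),
\qquad w_1 + w_2 + w_3 = 1, \quad w_i \ge 0
```

Here $f_{\mathrm{comp}}$ denotes the mission completion rate, $f_{\mathrm{profit}}$ the mission profitability, and $f_{\mathrm{bal}}$ a resource load-balancing measure, each assumed to be scaled to a comparable range before weighting.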

However, current models do not fully incorporate real-world constraints in mission design. In particular, they do not account for factors such as data transmission and satellite state estimation. As a result, while these models may be useful for ideal satellite operations, their applicability to already deployed satellites remains limited.

Therefore, while these attempts contribute to improving the efficiency of satellite mission planning, they rely on accurate satellite state predictions. Consequently, inaccurate state predictions make it difficult to directly apply these methods to mission execution.

Additionally, ground-based mission design for LEO satellites plays a crucial role in satellite performance and mission success. It requires an integrated consideration of various factors, including orbit design, communication system development, data management, and automation technologies. Recent advancements in design methodologies and technological developments have been focused on enhancing the efficiency and reliability of these elements.

However, for LEO satellites, the limited ground contact periods make accurate future state prediction challenging. Existing research has been conducted based on the assumption that satellite telemetry can be predicted accurately within a certain range, but physical and environmental limitations still persist. Therefore, continuous technological advancements are required to mitigate these challenges. As a result, there is a growing need for algorithms and technologies that predict the future state of satellites to enhance mission design and execution [10].

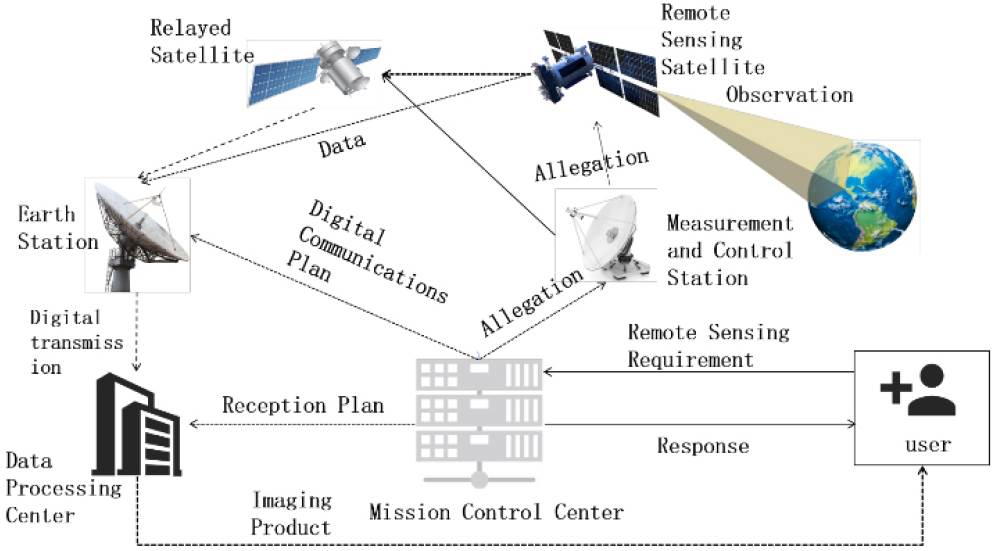

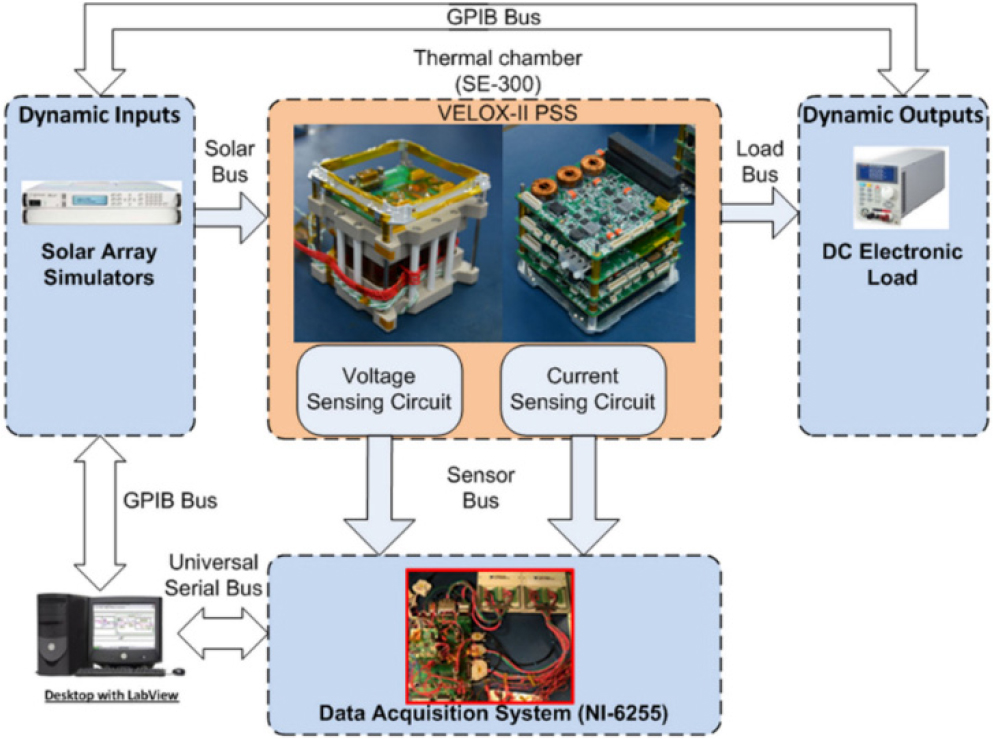

Further research has been conducted, as shown in Fig. 8, to propose a new approach for optimizing the Electrical Power System (EPS) of LEO satellites using artificial intelligence.

LEO satellites are becoming increasingly important across various applications due to their miniaturized design and cost-effectiveness. Consequently, an appropriate EPS design is essential. The EPS is responsible for power generation, management, storage, control, protection, and distribution, making it one of the key factors that determine the overall mission success of a satellite.

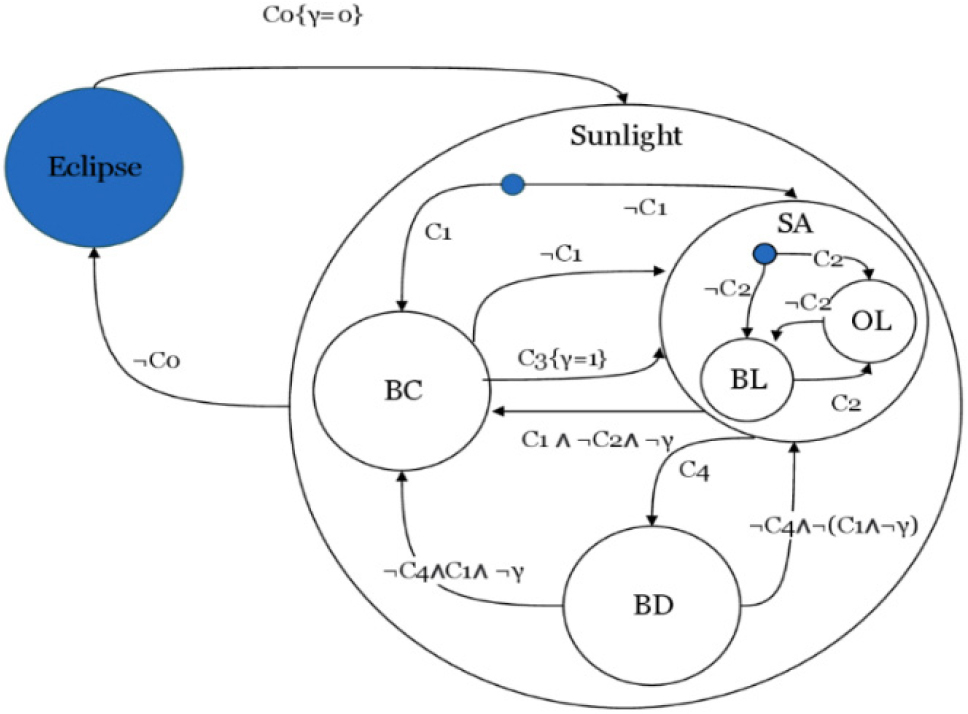

EPS design is customized based on mission requirements and is generally classified as Direct Energy Transfer (DET) or Peak Power Tracking (PPT) methods. In this study, a Finite-State Supervisor (FSS) was considered to efficiently manage the interactions between solar panels, batteries, and loads. This system implements Maximum Power Point Tracking (MPPT) for solar panels while optimizing battery charging and discharging processes, ensuring compliance with the fundamental constraints of load servicing. The supervisor receives inputs such as battery status, load power demands, temperature, and solar irradiance to transition between states and control system operations. This enables stable and efficient power management under diverse operational conditions.
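The supervisor logic can be sketched as a small finite-state machine. The four modes, the thresholds, and the reduction of the inputs to state of charge, available array power, and load demand are assumptions made for illustration; the supervisor described above also takes temperature and solar irradiance as inputs.

```python
from enum import Enum


class Mode(Enum):
    MPPT_CHARGE = "mppt_charge"  # track maximum power; surplus charges the battery
    FLOAT = "float"              # battery full; surplus power must be shed
    DISCHARGE = "discharge"      # battery covers the power deficit
    LOAD_SHED = "load_shed"      # assumed protective mode when the battery is low


def next_mode(soc: float, p_array_w: float, p_load_w: float,
              soc_min: float = 0.2, soc_max: float = 0.95) -> Mode:
    """Select the operating mode from battery SOC and the power balance."""
    if p_array_w >= p_load_w:
        return Mode.FLOAT if soc >= soc_max else Mode.MPPT_CHARGE
    return Mode.LOAD_SHED if soc <= soc_min else Mode.DISCHARGE
```

Each mode would then set its own control variables, such as the converter duty cycle and the battery charging current.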

By optimizing the size of solar panels and batteries, the EPS design can be further enhanced. The optimization process maximizes system efficiency and reliability by considering battery charging current and initial state of charge (SOC). Additionally, during the battery charging process, factors such as charging time and current profiles can be optimized. To achieve this, various operating modes of the system are defined, and appropriate control variables are set for each mode. The duty cycle of the DC-DC converter and battery charging current are regulated within each mode, and if necessary, a bleeder circuit is activated to manage excess power. This approach enables optimized operation of solar panels and batteries while fulfilling load requirements and minimizing energy losses.

Several methodologies have been proposed to optimize the size of solar panels and batteries through these processes. The optimization process involves exploring feasible battery charging currents and initial SOC values using a mesh grid method to determine the optimal parameters. Simulations are then conducted based on these parameters to derive the optimal system size and tuning values. However, this approach mainly provides a design framework for power system planning and management and cannot be directly applied to mission design.
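The mesh grid exploration can be illustrated with a toy one-orbit battery model. All numerical values (capacity, load current, sunlit and eclipse durations, depth-of-discharge limit) and the selection rule of preferring the smallest feasible charging current are assumptions for this sketch, not parameters from the cited studies.

```python
import itertools


def simulate_orbit(i_charge_a: float, soc0: float, capacity_ah: float = 20.0,
                   load_a: float = 4.0, sun_min: int = 60, eclipse_min: int = 35):
    """Toy battery model over one orbit; returns (minimum SOC, final SOC)."""
    soc = soc_min = soc0
    for minute in range(sun_min + eclipse_min):
        # Charge at i_charge_a in sunlight, discharge at load_a in eclipse.
        current = i_charge_a if minute < sun_min else -load_a
        soc = min(1.0, soc + current / 60.0 / capacity_ah)
        soc_min = min(soc_min, soc)
    return soc_min, soc


def grid_search(currents, socs, dod_limit: float = 0.3):
    """Scan the (charging current, initial SOC) grid for feasible operating points."""
    feasible = []
    for i_chg, soc0 in itertools.product(currents, socs):
        soc_min, soc_end = simulate_orbit(i_chg, soc0)
        # Feasible if depth of discharge stays within limits and the orbit is energy balanced.
        if soc_min >= 1.0 - dod_limit and soc_end >= soc0:
            feasible.append((i_chg, soc0))
    return min(feasible) if feasible else None
```

In a full design study, the toy model would be replaced by the EPS simulation, and the selected point would feed the sizing of the solar panels and battery.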

Furthermore, research has been conducted on utilizing machine learning (ML) for channel prediction in optical-based LEO satellite communication systems to implement adaptive transmission rates and power control [11]. Free-space optical (FSO) communication offers high data transmission rates and wide coverage; however, its performance can degrade due to atmospheric turbulence and alignment errors. To overcome these limitations, ML-based channel prediction methods have been introduced to design adaptive transmission rate and power control mechanisms.

Existing studies on LEO satellite systems have primarily focused on adjusting data rates at a fixed transmission power, which can result in low energy efficiency. To address this, research has combined transmission rate adjustment with transmission power control for more efficient energy management. However, similar to EPS optimization, this approach is not readily applicable to already deployed satellites.

Due to the limited accessibility of satellite systems, ground-based mission design has primarily relied on power budget analysis, despite the importance of future state prediction for satellite mission planning. Power budget analysis is a technique used during the satellite design phase, and its prediction accuracy varies depending on heritage, the designer’s expertise, and underlying assumptions [12]. This method is mainly utilized to determine the energy balance and capacity requirements of a satellite by analyzing its generated and consumed power. For this analysis, the power requirements of each unit are documented, and the required power consumption is categorized based on the mission. However, power consumption includes factors such as heaters for temperature control, power transmission efficiency, and duty cycles, which often result in discrepancies between predicted and actual power consumption in orbit. Similarly, power generation is estimated based on assumptions about the satellite’s orbit, attitude, and temperature, leading to potential deviations from actual values. In power budget-based analysis, assumptions are allocated based on satellite development heritage, which directly affects prediction accuracy.
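A power budget analysis of this kind reduces to an energy balance per orbit. The sketch below is a simplified illustration: the function name, the single lumped efficiency factor, and the example figures are assumptions, and real budgets separate many more loss terms.

```python
def orbit_energy_margin(p_gen_w: float, sun_min: float, loads,
                        orbit_min: float, efficiency: float = 0.9) -> float:
    """Energy margin per orbit in Wh.

    loads: list of (power_w, duty_cycle) pairs, one per unit.
    efficiency: assumed lumped power transmission efficiency.
    """
    generated_wh = p_gen_w * sun_min / 60.0 * efficiency
    average_load_w = sum(power * duty for power, duty in loads)
    consumed_wh = average_load_w * orbit_min / 60.0
    return generated_wh - consumed_wh


# Hypothetical units: bus electronics, payload, heaters
loads = [(50.0, 1.0), (100.0, 0.2), (20.0, 0.5)]
margin = orbit_energy_margin(p_gen_w=200.0, sun_min=60.0, loads=loads, orbit_min=95.0)
```

Because the duty cycles and the efficiency factor are assumed rather than measured, small errors in them shift the margin directly, which is the source of the discrepancy between predicted and in-orbit power consumption noted above.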

As an alternative, power analysis that incorporates non-contact periods and the expansion of probability-based prediction techniques commonly used in ground-based applications can be considered. One approach is to use the Seasonal Autoregressive Integrated Moving Average (SARIMA) model for future state prediction. The Autoregressive Integrated Moving Average (ARIMA) model combines Auto-Regressive (AR) and Moving Average (MA) components to capture the probabilistic structure of observations over time.

The ARIMA model is characterized by three key parameters: autoregressive order (p), differencing order (d), and moving average order (q), enabling it to generate predictions based solely on past observations and error terms. Additionally, it assigns greater weight to more recent observations, making it well-suited for short-term forecasting. However, ARIMA models cannot effectively account for the seasonality or periodicity of time-series data.

To address this limitation, the SARIMA model extends ARIMA by incorporating Seasonal Auto-Regressive (SAR) and Seasonal Moving Average (SMA) components, making it a more suitable predictive technique for handling seasonal patterns [13,14].

A time series represented by the SARIMA model has a seasonal period $s$, along with non-seasonal and seasonal autoregressive orders $p$ and $P$, non-seasonal and seasonal differencing orders $d$ and $D$, and non-seasonal and seasonal moving average orders $q$ and $Q$. It is expressed as $\mathrm{SARIMA}(p, d, q)(P, D, Q)_s$. The corresponding representation is given in Eq. (1) below.
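For reference, a SARIMA model is commonly written in backshift-operator notation as follows. The symbols $B$, $\phi$, $\Phi$, $\theta$, $\Theta$, $y_t$, and $\varepsilon_t$ are the conventional textbook choices and are assumed here; they may differ from the notation of the cited works.

$$
\phi_p(B)\,\Phi_P(B^s)\,(1-B)^d\,(1-B^s)^D\,y_t = \theta_q(B)\,\Theta_Q(B^s)\,\varepsilon_t
$$

Here $B$ is the backshift operator ($B\,y_t = y_{t-1}$), $y_t$ is the observation at time $t$, $\varepsilon_t$ is a white-noise error term, and the polynomials are $\phi_p(B) = 1 - \phi_1 B - \dots - \phi_p B^p$, $\Phi_P(B^s) = 1 - \Phi_1 B^s - \dots - \Phi_P B^{Ps}$, $\theta_q(B) = 1 + \theta_1 B + \dots + \theta_q B^q$, and $\Theta_Q(B^s) = 1 + \Theta_1 B^s + \dots + \Theta_Q B^{Qs}$.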

For this reason, SARIMA-based modeling has been widely used to predict the power generation and consumption of terrestrial solar cell systems, where it has demonstrated high prediction accuracy. The time-series data of power generation and consumption in satellite systems exhibit causal relationships over time, similar to those observed in ground-based systems, and vary with operational periods and seasonal changes. However, additional factors such as mission variations and attitude adjustments make it challenging to accurately predict power generation and consumption using probability-based models alone.

In particular, LEO satellites experience variations in power generation and consumption due to changes in distance from the Sun and temperature fluctuations across different seasons. Additionally, load fluctuations during mission execution can exceed 100% depending on the design, making it difficult to ensure an accurate estimation of maximum battery consumption. As a result, probability-based modeling also has inherent limitations.

Finally, beyond ground-based battery state estimation, research has been conducted on onboard battery state estimation [15]. The focus of onboard prediction has primarily been on reducing satellite computational load while ensuring estimation accuracy. As shown in Fig. 9, onboard state estimation research has mainly explored methods to reduce memory and CPU loads, as well as mathematical model-based approaches. These techniques have been primarily developed for fault management in satellite power systems.

However, because onboard estimation does not transmit information from non-contact periods to the ground, it is unsuitable for mission design, although it remains useful for fault management design.

Next, artificial intelligence-based research has been conducted to analyze satellite performance and predict future states using satellite telemetry data [16]. Telemetry data enables real-time monitoring of a satellite’s status, providing critical information for predicting potential failures and improving operational efficiency. Additionally, nonlinear modeling techniques are required to effectively process and utilize this data for prediction since telemetry data is structured in a time-series format.

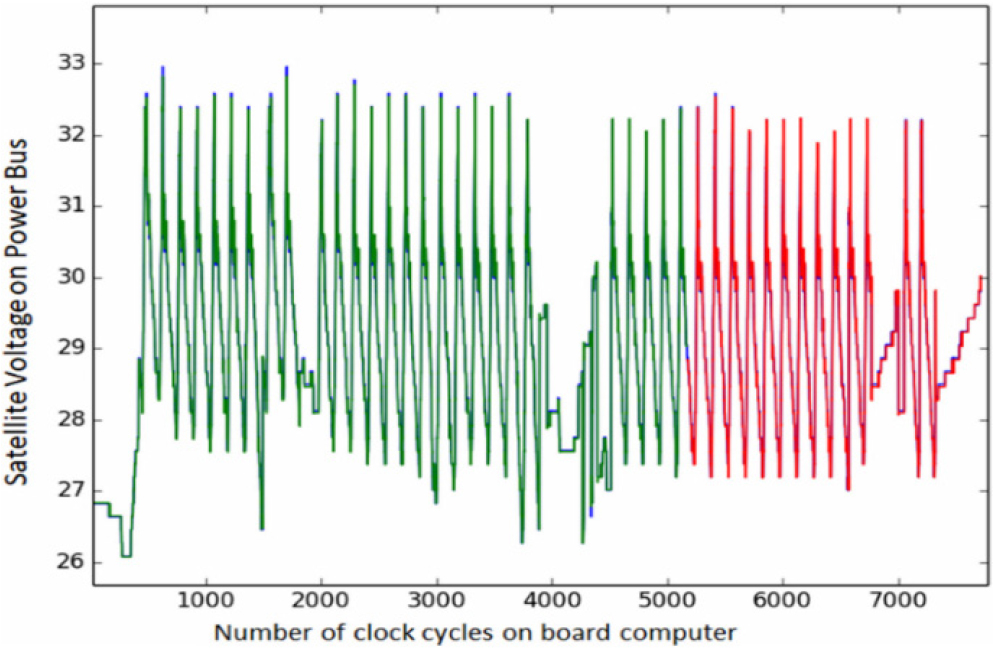

As shown in Fig. 10, this study evaluates the performance of a nonlinear model based on data collected from the EGYPTSAT-1 satellite and aims to identify the optimal algorithm applicable to satellite operations.

Through the analysis of EGYPTSAT-1 telemetry data, it was confirmed that artificial intelligence learning models are effective in early detection of anomalies in satellites and in predicting potential failures. However, this study was limited to specific telemetry data and only proposed a method for telemetry prediction, without performing an estimation of the satellite’s overall state. Additionally, it used telemetry inputs that are difficult to estimate for future predictions, making it impractical for actual satellite operations. Nevertheless, this study demonstrated the feasibility of artificial intelligence-based future state prediction for satellites and provided a foundational study for long-term operational applications.

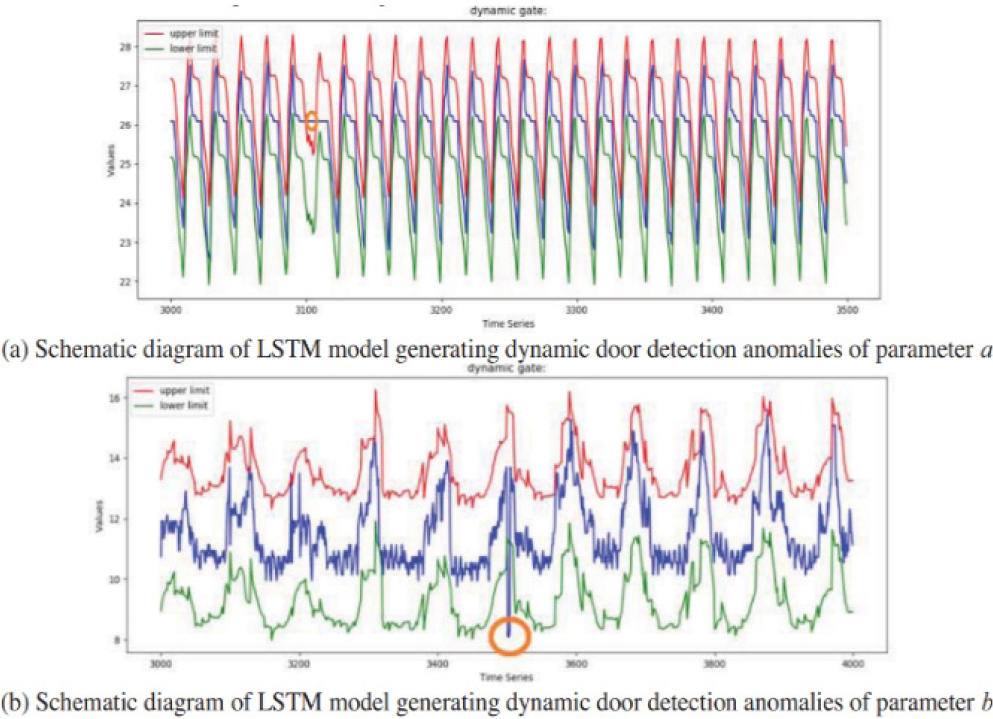

Furthermore, research on fault detection and mission interruption using satellite telemetry has also been conducted [17]. Specifically, LSTM (Long Short-Term Memory)-based models were proposed to detect anomalies in LEO satellite power systems, focusing on early anomaly detection for mission execution, as shown in Fig. 11. To address the limitations of traditional threshold-based anomaly detection methods, a dynamic approach was proposed to process time-series data adaptively.

For this purpose, the study analyzed the satellite telemetry and explored methods for predicting dynamic data states and detecting anomalies using artificial intelligence-based models. However, since the research was aimed at satellite protection during anomalies, it could not be directly applied to mission design.

Therefore, existing studies are not directly applicable to extending ground-based mission design. However, with increasing interest in maximizing satellite performance, there is a growing demand for accurate ground-based future state estimation [18].

In conclusion, research aimed at accurately predicting the future state of satellites based on ground-based analysis and at optimizing mission design accordingly is still in its early stages. The necessity for further research and technological development in this field is becoming increasingly important. As satellite operational environments become more complex and mission requirements more diverse, precise state prediction and mission planning based on such predictions are emerging as critical tasks in satellite operations.

In particular, for LEO satellites, where resources are limited and operational conditions are constrained, accurately predicting future states is essential for efficient mission execution. This capability can help reduce resource wastage, improve mission success rates, and support stable satellite operations.

However, current research and technological developments have not yet fully met the demands for stable and efficient satellite operations. Existing approaches primarily rely on simple prediction models or limited datasets, which fail to effectively account for the complex variables and dynamic changes occurring in real-world environments. This limitation increases uncertainty in satellite operations, ultimately hindering optimal mission design and resource utilization.

In particular, the lack of accuracy in continuously monitoring satellite status and predicting future performance increases the risk of mission failure and undermines the overall stability of the system. Given this situation, the development of more sophisticated and reliable future state estimation methods has become a critical challenge that must be urgently addressed in the field of satellite operations.

3. ANALYSIS OF REQUIRED TECHNOLOGIES

Based on the research trends examined above, a precise analysis of power conversion systems, energy storage devices, and LEO satellite attitude prediction modeling is essential for optimizing mission execution and satellite operations. While current studies propose various approaches for satellite mission design, they still face limitations in accurately predicting satellite states and ensuring long-term operational stability.

In particular, to address issues such as power system degradation, state prediction limitations in ground station blackout periods, and inefficient utilization of satellite resources, more reliable dynamic modeling is required.

In this section, we analyze three key elements including power conversion systems, energy storage devices, and LEO satellite attitude prediction modeling based on the discussed research trends. Furthermore, we propose modeling techniques aimed at maximizing the efficiency of satellite operations.

3.1. Power Conversion System Modeling

To complement the shortcomings of existing research and to develop a predictive model applicable for actual satellite operations, the following approach is required.

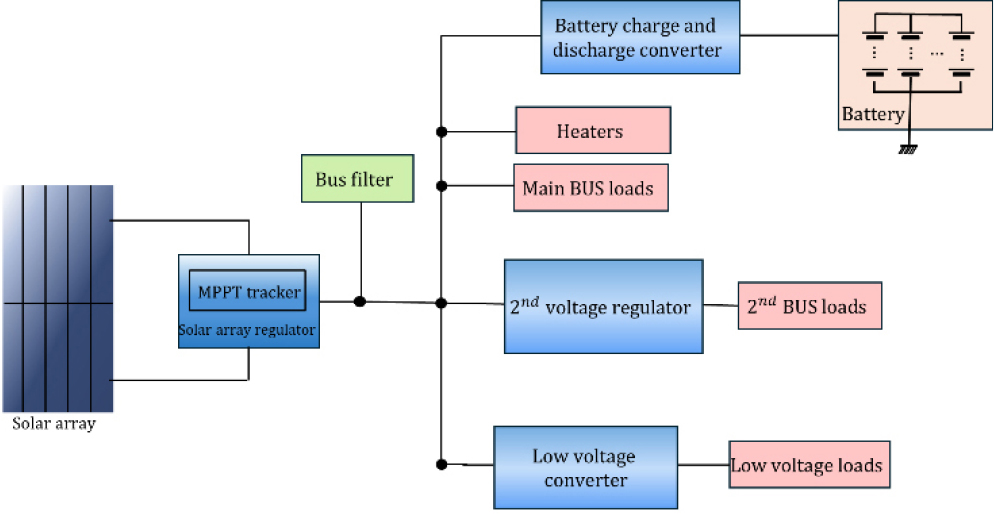

The power system of a LEO satellite is divided into three components: the photovoltaic (PV) system responsible for power generation, the power transformer for power conversion, and the battery system, which compensates for power demand exceeding generation capacity during eclipse periods or peak consumption [19].

First, the solar panel system for power generation varies in output depending on temperature and solar irradiance. Additionally, changes in temperature affect the short-circuit current and open-circuit voltage, leading to variations in the maximum power point. Therefore, each satellite’s PV system must be designed to align with its orbit, expected temperature conditions, and power load requirements [20].

The system is typically designed in a way that the generated power exceeds the consumed power over a single day or one orbital period. The design assumptions include temperature margin errors and worst-case power consumption scenarios to ensure stable operation under extreme conditions [21].

The power generated by the solar panels is converted into primary power through a primary power transformer. The primary power conversion consists of three operational modes: the Maximum Power Point Tracking (MPPT) mode, which estimates the maximum power generation of the solar panels; the Constant Voltage Mode (CVM), which prevents overcharging; and the Direct Energy Transfer (DET) mode, which prevents damage to the converter caused by excessive power generation from the solar panels.

The MPPT mode estimates the maximum power point based on the product of the solar panel’s short-circuit current and open-circuit voltage. In contrast, the CVM controls the system by matching the battery voltage with the open-circuit voltage of the solar panels to prevent unnecessary battery charging. The DET mode is activated to handle excess power generation that exceeds the primary power range. This mode is particularly useful at eclipse exit, when the solar panels are still cold and can generate power beyond the transformer’s design limits.

The output from the primary power conversion is utilized for loads that include its own power conversion, loads with a wide input voltage range, and as an input for secondary power conversion. As a result, the output voltage of the primary power conversion varies depending on the battery’s state of charge, solar panel temperature, power generation levels, and load fluctuations. In satellites without an accurate battery state estimation logic, battery protection design is often simplified by measuring the output voltage of the primary power conversion and setting it within an operational range. Additionally, primary power conversion transformers used in satellites incorporate redundant designs (n of k) to ensure reliability in case of failures.

Next, a secondary power regulation system is included to provide suitable voltage for loads that have a specified input voltage range or lack an independent power regulation system. Depending on the requirements, additional low-voltage transformers may be integrated into the secondary power conversion system. In general satellite systems, standard voltage requirements for loads include +28 V, +15 V, -15 V, +12 V, -12 V, +5 V, and –5 V. The design specifications vary based on the satellite’s units and loads, as well as constraints such as cost, weight, and size. Depending on these constraints, a redundant design strategy, either cold (1 of 2) or n of k, is determined.

Furthermore, to manage power demand exceeding generation during eclipse periods and mission operations, the battery system is designed to ensure a balanced charge-discharge energy cycle, adhere to maximum discharge limits, and maintain an optimal average discharge level over a daily cycle.

In terms of charge-discharge energy balance, the battery is designed so that its state of charge (SOC) at the end of the day is higher than or at least equal to its SOC at the beginning of the day, preventing continuous depletion. The maximum discharge limit and average discharge levels are typically specified by battery manufacturers to ensure performance throughout the satellite’s operational lifespan. Adhering to these constraints is essential to prevent accelerated battery degradation and ensure long-term reliability.
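The energy-balance and discharge-limit checks described above can be sketched as a simple bookkeeping loop. The following Python sketch is illustrative only: the function names, the 600 Wh capacity, the 96-minute orbit, the power levels, and the 20% discharge limit are assumptions chosen for the example, and conversion losses are ignored.

```python
def simulate_soc(power_gen_w, power_load_w, dt_s, capacity_wh, soc0):
    """Integrate net power into the state of charge (SOC, fraction 0..1)."""
    soc = soc0
    history = [soc]
    for p_gen, p_load in zip(power_gen_w, power_load_w):
        net_wh = (p_gen - p_load) * dt_s / 3600.0
        # Clamp: the battery cannot charge above full or discharge below empty.
        soc = max(0.0, min(1.0, soc + net_wh / capacity_wh))
        history.append(soc)
    return history


def check_budget(history, max_dod):
    """Return (energy_balanced, dod_ok) for one charge-discharge cycle."""
    energy_balanced = history[-1] >= history[0]      # end SOC >= start SOC
    dod_ok = (1.0 - min(history)) <= max_dod         # deepest discharge within limit
    return energy_balanced, dod_ok


# Hypothetical orbit: 36 min of eclipse followed by 60 min of sunlight, 1-minute steps.
gen = [0.0] * 36 + [300.0] * 60
load = [150.0] * 96
history = simulate_soc(gen, load, dt_s=60.0, capacity_wh=600.0, soc0=1.0)
balanced, dod_ok = check_budget(history, max_dod=0.2)
print(balanced, dod_ok, round(min(history), 3))
```

In this assumed case the battery discharges to about 85% SOC during eclipse and recovers fully in sunlight, so both checks pass; raising the eclipse load shows how quickly the discharge limit is violated.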

The design of the battery system for power storage is determined based on the necessity and inclusion of charge-discharge control mechanisms. In geostationary satellites and high-performance LEO satellites, dedicated transformers are often integrated to directly regulate charge and discharge currents, enabling active battery protection and management. However, such an approach introduces additional costs, increases system complexity, and adds weight and volume to the satellite.

Furthermore, in the event of critical satellite anomalies, determining the prioritization of protective measures becomes a complex challenge. For instance, in situations involving sudden attitude instability or the need for urgent battery protection, a sophisticated fault management system may be required to determine the appropriate course of action, ensuring both satellite stability and battery longevity.

Therefore, most LEO satellites perform battery charge and discharge operations in coordination with the output of the primary power transformer, as shown in Fig. 12, based on the generated and consumed power. Accordingly, the battery charging current is determined by factors such as the battery’s state of charge (SOC), the temperature of the solar panels, and variations in the solar panel’s sun-pointing angle.

Additionally, the battery discharge current is indirectly determined by factors such as the maximum power point tracking (MPPT) algorithm, eclipse periods based on the satellite’s orbit, load variations due to mission execution, and power generation fluctuations caused by satellite attitude changes. Therefore, for satellites where charge and discharge currents are not directly controlled, predicting these currents is essential for mission feasibility analysis, fault management design, and satellite simulator development.

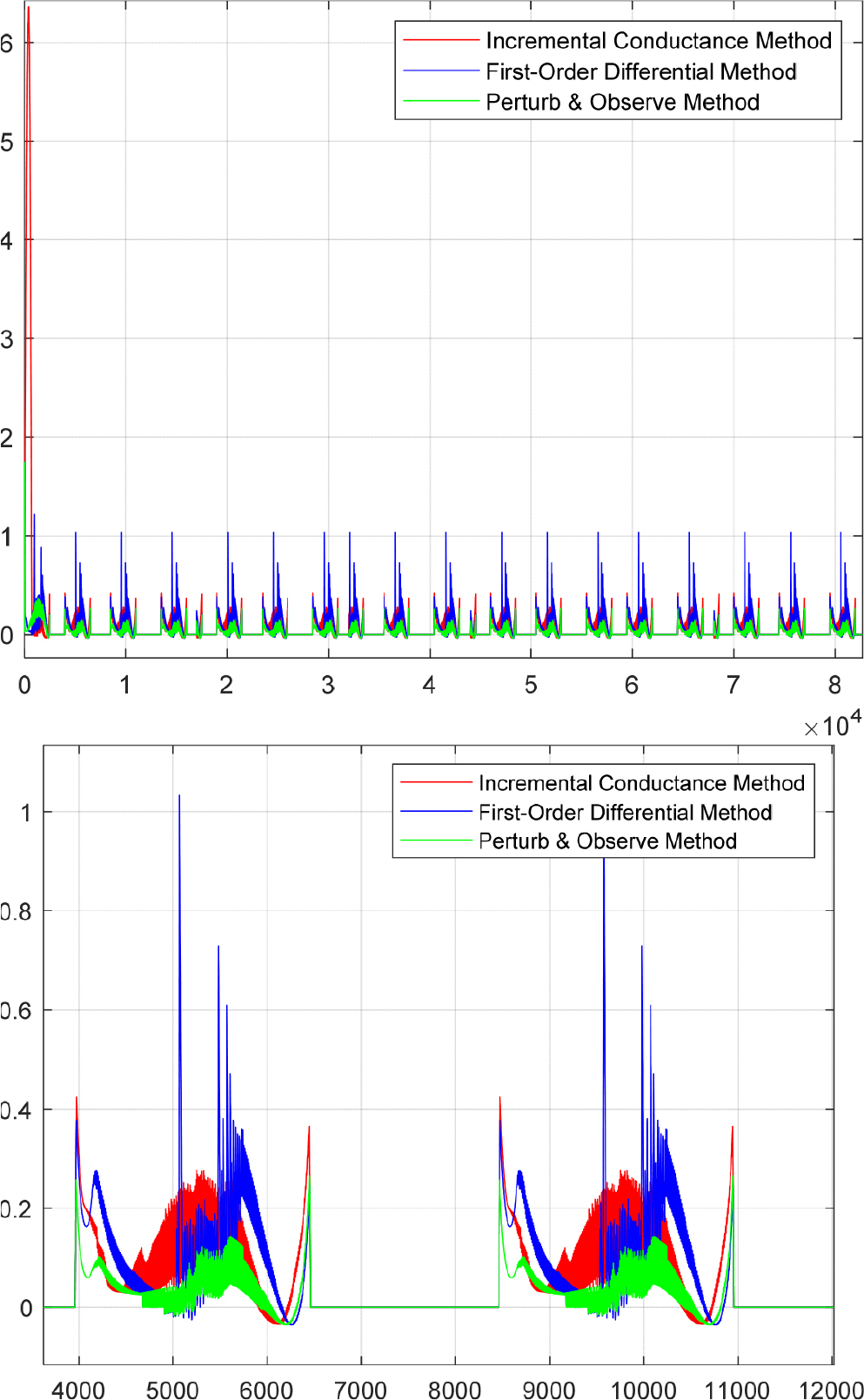

To validate this, recent studies have conducted simulations on solar power regulators implementing constant resistance control and variable amplitude MPPT. The experimental results confirmed the necessity of stable operation and power limitation functions for solar power regulators and highlighted the importance of dynamic resistance control techniques for improving MPPT performance. In satellite environments, where direct control of charge-discharge currents is challenging, accurate current prediction is crucial for mission feasibility analysis, fault management design, and satellite power simulator development. For this, the stable operation and power limitation functions of solar power regulators must be well established, and the accuracy of the MPPT algorithm plays a critical role.

To further verify the need for maximum power point estimation modeling, additional simulations were conducted with the same regulator configuration (constant resistance control and variable amplitude MPPT). The results reaffirmed the findings above regarding stable regulator operation, power limitation, and dynamic resistance adjustment.

Furthermore, to determine the optimal maximum power point estimation method, a comparative simulation of three different algorithms was performed. The results indicated that the Perturb & Observe method exhibited the lowest average error. Based on this, an optimal power point estimation was conducted under the assumption of a sun-synchronous orbit at an altitude of approximately 580 km and an inclination angle of 97 degrees. The validity of the modeling was verified by analyzing variations in maximum power. The results are presented in Fig. 13 below.
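The Perturb & Observe method mentioned above can be illustrated with a minimal sketch. The PV curve below is a deliberately simplified exponential model with hypothetical parameters (`i_sc`, `v_oc`, `a`); it is not the solar array model used in the simulations, and the step size and iteration count are likewise assumptions.

```python
import math


def pv_current(v, i_sc=8.0, v_oc=40.0, a=2.0):
    """Simplified PV curve (assumed): current is ~i_sc at low voltage, zero at v_oc."""
    return i_sc * (1.0 - math.exp((v - v_oc) / a))


def perturb_and_observe(v_start, step, iterations):
    """Perturb the operating voltage; reverse direction whenever power drops."""
    v = v_start
    p_prev = v * pv_current(v)
    direction = 1.0
    for _ in range(iterations):
        v += direction * step            # perturb
        p = v * pv_current(v)            # observe
        if p < p_prev:
            direction = -direction       # power fell: step back the other way
        p_prev = p
    return v


v_mpp = perturb_and_observe(v_start=20.0, step=0.1, iterations=500)
print(round(v_mpp, 1), round(v_mpp * pv_current(v_mpp), 1))
```

The operating point climbs toward the maximum power point and then oscillates around it within a few step widths, which is the characteristic steady-state behavior of this method; a smaller step reduces the oscillation at the cost of slower tracking.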

3.2. Energy Storage System Modeling

Generally, a battery cell refers to a fundamental electrochemical unit composed of electrodes and an electrolyte, while a battery is a unit that supplies power by connecting multiple such cells. The characteristics for battery cell modeling and analysis are shown in Table 1.

TABLE 1.

Characteristics of Battery Modeling

Directly modeling such chemical properties electrically presents many challenges [22]. Therefore, electrical modeling of batteries requires the use of circuit representations in voltage schematics and time-domain characteristics. Since batteries exhibit complex electrochemical and nonlinear characteristics, implementing batteries as equivalent circuits is both fundamental and essential for estimating the state of charge (SOC) and state of health (SOH) [23].

The first step in measuring the battery parameters is determining the battery’s capacity. Typically, the Ah-counting method is used for this purpose. Next, it is necessary to measure the battery’s open circuit voltage (OCV), which is the terminal voltage with no current flowing and therefore with no voltage drop across the internal resistance. Measuring the OCV makes it possible to characterize properties such as the charge transfer resistance and the diffusion region.

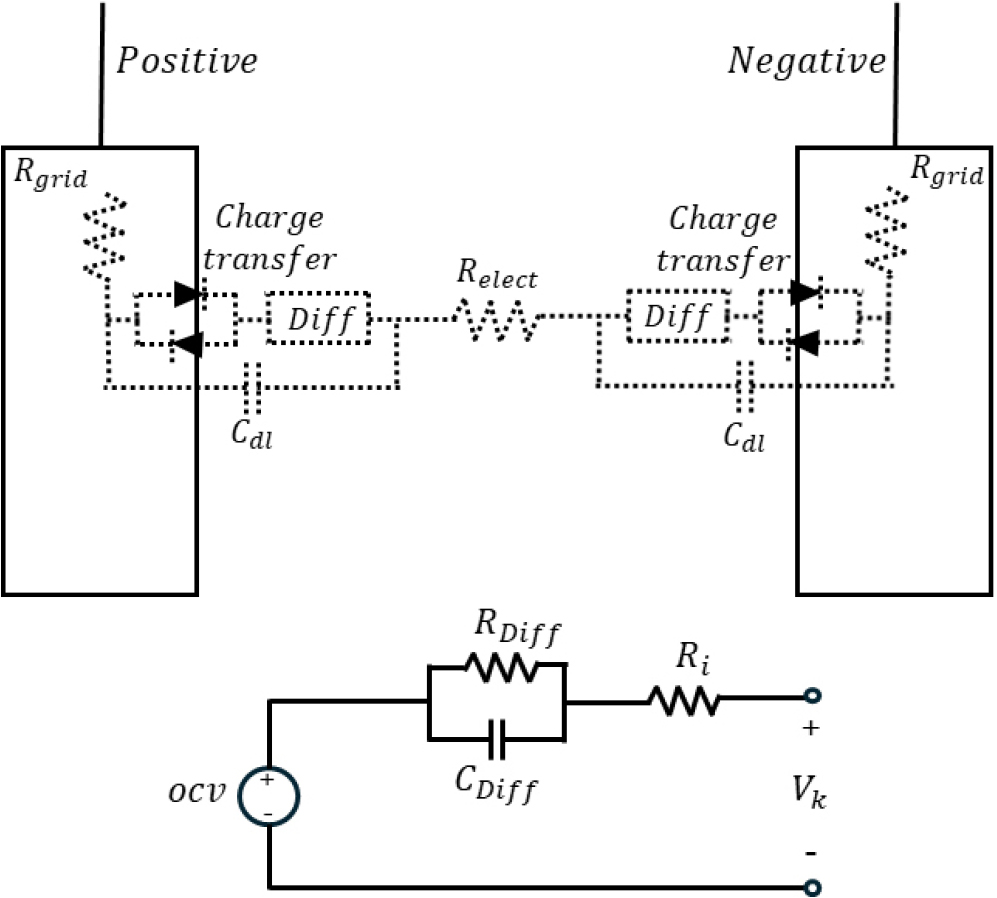

Fig. 14 illustrates the basic battery model or equivalent circuit, where OCV represents the open circuit voltage. R0 denotes the series equivalent resistance, which accounts for the resistance components of the electrolyte and electrode plates. The section consisting of the R1-C1 parallel connection represents the polarization phenomenon that occurs during the charging and discharging process of the battery [24].
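As a minimal sketch of how this equivalent circuit behaves, the terminal voltage under a load current can be simulated in discrete time. The parameter values and the function name are hypothetical, discharge current is taken as positive, and the OCV is held constant on the assumption that the SOC changes negligibly over the simulated window.

```python
import math


def terminal_voltage(currents_a, dt_s, ocv_v, r0, r1, c1):
    """First-order equivalent circuit: V = OCV - R0*I - V1, with V1 across R1 || C1."""
    alpha = math.exp(-dt_s / (r1 * c1))   # decay of the polarization voltage per step
    v1 = 0.0                              # battery assumed at rest initially
    voltages = []
    for i in currents_a:
        voltages.append(ocv_v - r0 * i - v1)
        # Exact update of the R1-C1 branch for a current held constant over dt_s.
        v1 = alpha * v1 + r1 * (1.0 - alpha) * i
    return voltages


# Hypothetical cell: 2 A discharge for 600 s, time constant R1*C1 = 30 s.
v = terminal_voltage([2.0] * 600, dt_s=1.0, ocv_v=4.0, r0=0.05, r1=0.03, c1=1000.0)
print(round(v[0], 3), round(v[-1], 3))
```

The first sample shows the instantaneous drop across R0, and the voltage then relaxes toward OCV minus (R0 + R1) times the current as the R1-C1 branch charges.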

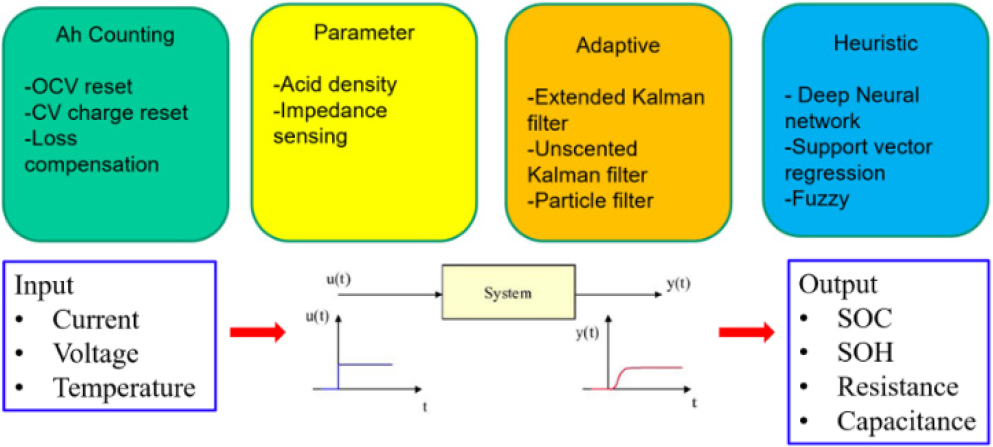

Next, it is necessary to consider methods for estimating the battery state. Fig. 15 illustrates various algorithms for estimating the state of charge (SOC) of a battery. The battery state estimation algorithms can be broadly categorized into the current integration method, parameter measurement method, circuit-based extended Kalman filter, unscented Kalman filter, particle filter, and data-driven heuristic measurement techniques [25]. Among these, the current integration method has low computational complexity but suffers from accumulated errors, necessitating a method for error initialization.

Ah-counting, that is, the current integration method, is a simple algorithm that only requires measuring and integrating the current. However, because it is a pure integration, an incorrect initial value produces an error that persists throughout the estimate. Additionally, noise and measurement errors in each current sample accumulate over time, which is a significant drawback.
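The two error sources of Ah-counting can be made concrete with a short sketch. The function name, the 10 Ah capacity, and the 0.05 A sensor bias are assumptions for illustration; discharge current is taken as positive.

```python
def coulomb_count(currents_a, dt_s, capacity_ah, soc0):
    """Ah-counting: subtract the integrated discharge current from the initial SOC."""
    soc = soc0
    for i in currents_a:
        soc -= i * dt_s / 3600.0 / capacity_ah
    return soc


true_current = [1.0] * 3600                       # 1 A discharge for one hour, 1 s steps
biased_current = [i + 0.05 for i in true_current]  # hypothetical 0.05 A sensor offset

soc_true = coulomb_count(true_current, 1.0, 10.0, soc0=0.80)
soc_biased = coulomb_count(biased_current, 1.0, 10.0, soc0=0.80)
soc_bad_init = coulomb_count(true_current, 1.0, 10.0, soc0=0.75)
print(round(soc_true, 4), round(soc_biased, 4), round(soc_bad_init, 4))
```

The sensor offset produces an error that grows with integration time, whereas the wrong initial value produces a constant offset that integration alone never removes, which is why a periodic re-initialization method is needed.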

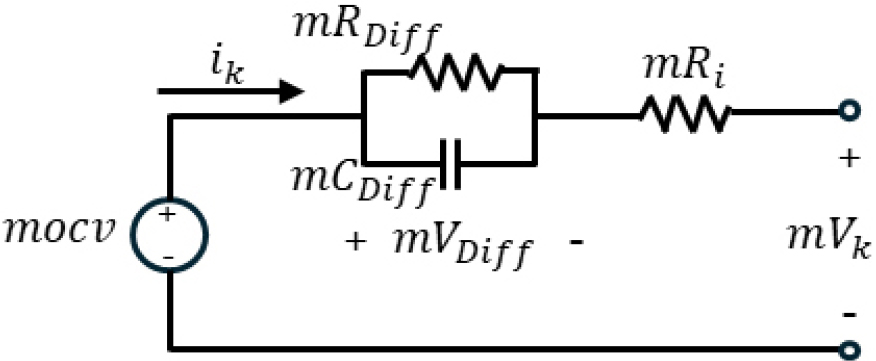

The batteries used in LEO satellites are configured as a package system. As a result, they consist of a combination of series and parallel structures, which makes battery modeling more complex. However, satellite systems cannot be repaired in the event of a failure and must meet their guaranteed lifespan. Therefore, a screening process is often performed to match the capacity and internal resistance of the battery cells [26]. Alternatively, if screening is not conducted to reduce differences in internal parameters, a cell balancing circuit is always included [27]. The cell balancing circuit compensates for differences in charge states between cells caused by variations in internal parameters, providing an effect similar to screening. Consequently, parameter differences between cells in the satellite battery package can be considered negligible, and the battery package, consisting of m series and n parallel connections, can be simplified as shown in Fig. 16 [28].
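Under the assumption of identical cells, the simplification to a single equivalent circuit follows from ordinary series and parallel combination rules. The sketch below is illustrative: the `EcmParams` container, the function name, and the cell values are hypothetical and not taken from the cited works.

```python
from dataclasses import dataclass


@dataclass
class EcmParams:
    ocv_v: float        # open circuit voltage
    r0_ohm: float       # series resistance
    r1_ohm: float       # polarization resistance
    c1_f: float         # polarization capacitance
    capacity_ah: float  # capacity


def pack_from_cell(cell, m_series, n_parallel):
    """Lump m series x n parallel identical cells into one equivalent circuit."""
    scale = m_series / n_parallel
    return EcmParams(
        ocv_v=cell.ocv_v * m_series,               # voltages add in series
        r0_ohm=cell.r0_ohm * scale,                # R scales by m/n
        r1_ohm=cell.r1_ohm * scale,
        c1_f=cell.c1_f / scale,                    # C scales by n/m, so R1*C1 is unchanged
        capacity_ah=cell.capacity_ah * n_parallel,  # capacities add in parallel
    )


cell = EcmParams(ocv_v=3.6, r0_ohm=0.05, r1_ohm=0.03, c1_f=1000.0, capacity_ah=3.0)
pack = pack_from_cell(cell, m_series=8, n_parallel=4)
print(pack)
```

Because resistance and capacitance scale inversely, the polarization time constant of the pack equals that of a single cell, so only the magnitudes of the voltage response change.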

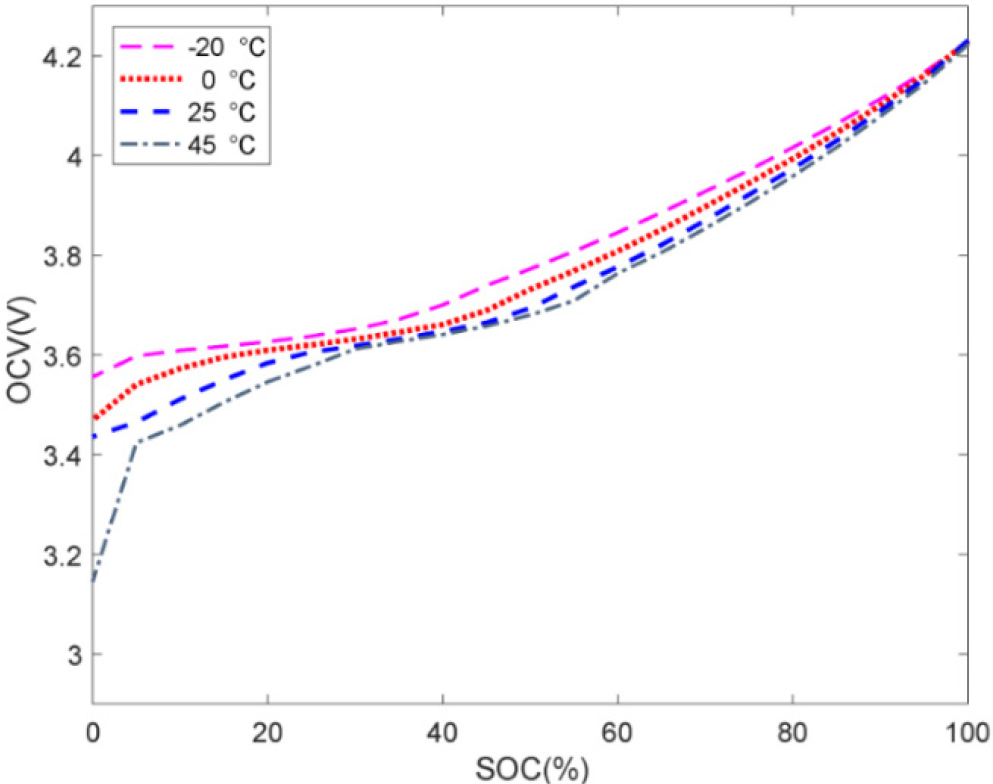

Additionally, the open circuit voltage (OCV) varies with state of charge (SOC) and temperature, as shown in Fig. 17 [29]. To use a circuit-based battery state estimation algorithm for satellites, it is necessary to calculate the OCV values considering temperature. In general, for batteries used on the ground, the impact of temperature on battery performance is significant, making the calculation of OCV variations with temperature essential. However, batteries used in LEO satellite systems are designed to operate reliably in specialized environments. To ensure this stability, active temperature control of the battery is implemented.

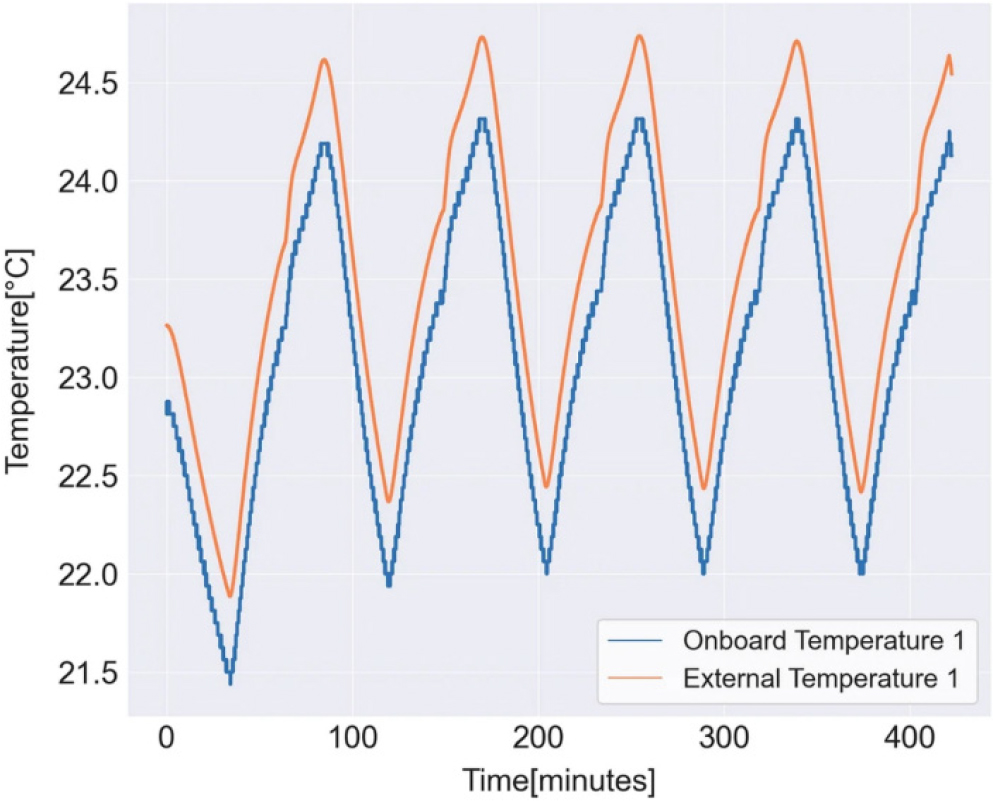

In satellite battery systems, key characteristics such as lifespan and aging are closely related to the operating temperature, so the temperature range is limited and managed to ensure operation under optimal conditions. As shown in Fig. 18, the temperature range of satellite batteries is much narrower compared to ground batteries, meaning the impact of temperature on the OCV calculation results is relatively minimal [30].

As a result, in the satellite environment, temperature changes can be neglected in OCV calculations, and the OCV can be simplified as a function of SOC alone.
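With temperature neglected, the OCV reduces to a one-dimensional lookup. A minimal sketch using linear interpolation is shown below; the table values are hypothetical placeholders, not measured data, and a real table would come from cell characterization.

```python
from bisect import bisect_right

# Hypothetical OCV-SOC table for a single cell (illustrative values only).
SOC_POINTS = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
OCV_POINTS = [3.0, 3.5, 3.65, 3.8, 3.95, 4.1]


def ocv_from_soc(soc):
    """Linearly interpolate the OCV at a given SOC, clamping SOC to [0, 1]."""
    soc = min(max(soc, 0.0), 1.0)
    # Index of the upper breakpoint of the segment containing soc.
    k = max(1, min(bisect_right(SOC_POINTS, soc), len(SOC_POINTS) - 1))
    s0, s1 = SOC_POINTS[k - 1], SOC_POINTS[k]
    v0, v1 = OCV_POINTS[k - 1], OCV_POINTS[k]
    return v0 + (v1 - v0) * (soc - s0) / (s1 - s0)


print(ocv_from_soc(0.5))
```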

3.3. Low Earth Orbit Satellite Attitude Prediction System Modeling

The attitude maneuvers for performing observation missions of LEO satellites can generally be divided into three stages: the actual observation mission phase, the attitude maneuver phase between missions, and the sun-pointing phase for maximum power generation [31]. Among these, the observation mission phase is used to point at the target, and the attitude is generated based on the desired observation points (Earth, star, space). Additionally, because the attitude is held for observation, it changes little during this phase. As a result, the target pointing phase determines the attitude based on the satellite’s time, position, velocity, and the required observation points.

Next, the duration of the attitude maneuver between target points varies depending on the maneuvering method and the profile generation process. Moreover, it depends on the attitude and angular velocity of both the previous and the next target phase, which makes it difficult to model. Furthermore, the wide variety of maneuver start and end conditions makes it hard to generalize. In general, the primary performance requirement for executing potential missions of LEO satellites is the time spent on maneuvers between mission phases. That is, the shorter the attitude maneuver time between target pointing phases and the more accurately it is predicted, the more missions can be executed.

Finally, after the observation mission is completed, there is a sun-pointing attitude maneuver for maximizing power generation. Therefore, to generate the desired power, the angle with the sun must be controlled within the range defined from the ground. Generally, sun-pointing control is implemented through onboard logic and is designed by considering the power consumption in the LEO satellite and the battery SOC.

To maximize the performance of satellite attitude maneuvers, the maximum values of angular acceleration and angular velocity should typically be used. However, in practice, the maximum angular acceleration and angular velocity of LEO satellites are primarily limited by the actuator’s maximum torque and maximum momentum. Additionally, there may be further limitations depending on factors such as the dynamic range of the gyros, the angular velocity required to ensure GPS receiver performance, or the tracking rate of the star tracker. These limitations must be considered when predicting the actual satellite attitude maneuvers.
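The effect of these limits on maneuver time can be sketched with a single-axis, rest-to-rest, bang-coast-bang profile. This is a simplified rigid-body approximation under stated assumptions (one rotation axis, constant inertia, no settling time); the function names and numerical values are hypothetical.

```python
import math


def rate_and_accel_limits(max_torque_nm, max_momentum_nms, inertia_kgm2, sensor_rate_limit):
    """Derive the usable angular rate and acceleration limits about one axis."""
    max_accel = max_torque_nm / inertia_kgm2
    # The rate is capped by actuator momentum and by sensor limits (gyro, star tracker).
    max_rate = min(max_momentum_nms / inertia_kgm2, sensor_rate_limit)
    return max_rate, max_accel


def slew_time(angle, max_rate, max_accel):
    """Minimum rest-to-rest slew time; angle, rate, and acceleration share one angle unit."""
    angle = abs(angle)
    ramp_angle = max_rate ** 2 / max_accel   # angle used to reach max rate and brake again
    if angle <= ramp_angle:
        return 2.0 * math.sqrt(angle / max_accel)    # triangular profile, no coast
    return angle / max_rate + max_rate / max_accel   # trapezoidal profile with coast


# Hypothetical limits in degrees: 1 deg/s and 0.1 deg/s^2.
print(slew_time(30.0, 1.0, 0.1), slew_time(2.5, 1.0, 0.1))
```

Small slews never reach the rate limit and are governed by the available torque, while large slews are dominated by the rate limit, so the binding constraint depends on the angular separation between targets.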

When predicting the target pointing attitude maneuver profile for observation on orbit, the flight software throughput for related calculations must be considered, and the upload process is performed from the ground. For such uploads, the profile is developed to be simplified while considering the efficiency of transmitted data. Consequently, during the reproduction process on the satellite, errors may occur, and modeling of these errors is necessary.

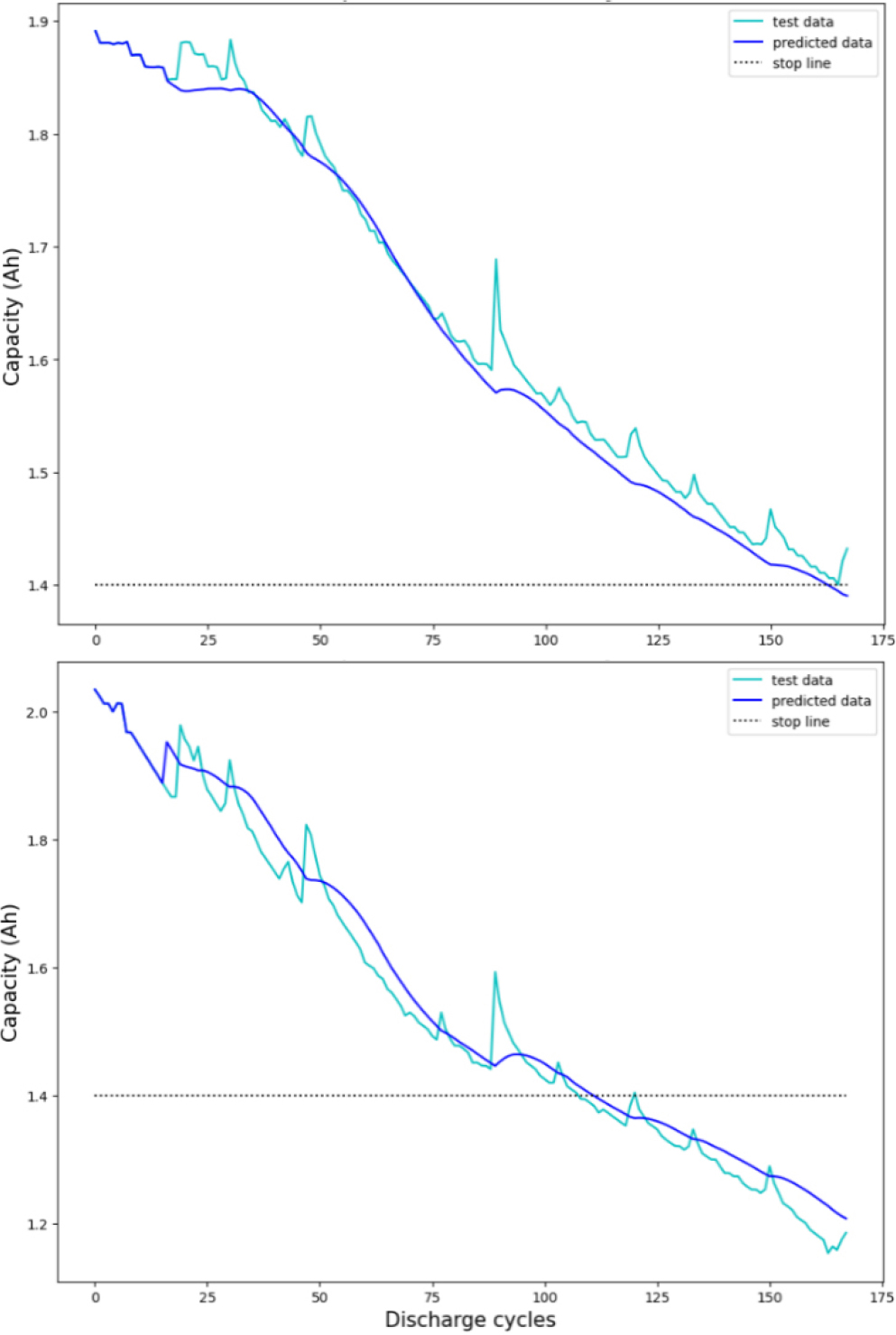

Next, to understand the impact of satellite battery degradation on the estimation of charge and discharge states, the capacity variation was estimated using the current and voltage data obtained from a data-driven model. Aside from seasonal variations, the maximum discharge current typically does not increase as the operational period extends. In contrast, the battery voltage drops over the operational period. This can be attributed to aging within the battery itself, which has a far greater impact than aging of the loads. Therefore, if battery degradation is not considered, the actual battery state of charge (SOC) on orbit may be estimated inaccurately.

To measure battery degradation, a common method is to apply a square-wave discharge current pulse and measure the resulting voltage variation to infer the internal resistance. However, for LEO optical satellites, short bursts of discharge current naturally occur during mission execution. As a result, even without artificially applying a discharge current, degradation can be estimated from the voltage variation data collected during the mission. As degradation progresses, the internal resistance, particularly the series resistance, tends to increase significantly. Thus, it was confirmed that a degradation estimation model based on internal series resistance (RI), utilizing voltage and current data collected during short mission intervals, can effectively predict the battery’s degradation trend.
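The underlying idea can be sketched as a ratio of voltage step to current step at abrupt load changes. This is a minimal illustration, not the estimation model of the study: the function name, the step threshold, and the synthetic telemetry are assumptions, discharge current is taken as positive, and the sampling interval is assumed short compared with the polarization time constant so that the step reflects the series resistance only.

```python
def estimate_series_resistance(voltages_v, currents_a, min_step_a=1.0):
    """Average -dV/dI over samples where the current changes abruptly."""
    estimates = []
    for k in range(1, len(currents_a)):
        di = currents_a[k] - currents_a[k - 1]
        if abs(di) >= min_step_a:                  # use only clear load steps
            dv = voltages_v[k] - voltages_v[k - 1]
            estimates.append(-dv / di)             # voltage falls when discharge rises
    if not estimates:
        return None                                # no usable step in this window
    return sum(estimates) / len(estimates)


# Synthetic telemetry: a 5 A mission burst on a battery with 0.05 ohm series resistance.
currents = [0.0, 0.0, 5.0, 5.0, 0.0, 0.0]
voltages = [4.0 - 0.05 * i for i in currents]
r_est = estimate_series_resistance(voltages, currents)
print(r_est)
```

Tracking this estimate over the mission lifetime and comparing it with the beginning-of-life value gives a simple indicator of the degradation trend.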

To verify the reliability of this model, experiments were conducted using NASA battery datasets (B0005, B0006, B0007). As illustrated in Fig. 19, the average estimation error remained within approximately 5%, supporting the accuracy and reliability of the model.

4. CONCLUSION

In this paper, we analyzed key technologies and necessary modeling elements to maximize the efficiency of LEO satellite operations. The conventional ground station-based prediction method has limitations in monitoring satellite status when the satellite is not in contact with the ground station. This results in conservative operations, leading to inefficient resource utilization and increased operational costs. To overcome these challenges, we propose the application of artificial intelligence (AI) and machine learning-based predictive models for anomaly detection in power systems, battery performance degradation prediction, and attitude control optimization.

Specifically, this study emphasizes the importance of modeling power conversion systems, energy storage devices, and attitude prediction systems while discussing how AI-based predictive models can enhance satellite operation performance. By reducing reliance on foreign technologies and developing an independent modeling system, the development timeline of satellite systems can be shortened, and operational costs can be reduced. Furthermore, the study highlights the need to advance power system monitoring, battery state diagnostics, and attitude maneuver optimization using reinforcement learning and time-series analysis techniques.

For future research directions, it is necessary to further refine AI-based predictive models and introduce autonomous optimization techniques for satellite constellation operations to maximize long-term operational efficiency. This will enhance the reliability of satellite operations, improve mission success rates, and optimize global space mission performance. By continuously advancing AI and machine learning-based data analysis techniques, more precise and stable state prediction models are expected to be implemented in future satellite operational environments. Therefore, the modeling methods and technical approaches proposed in this study are anticipated to make significant contributions to the establishment of future satellite design and operational strategies.